As autonomous AI agents move from experimentation into real-world deployment, a challenge is emerging as quickly as the opportunity. Agentic systems promise to automate complex workflows across industries, but their ability to act independently also introduces security, compliance, and reliability risks that existing software tools were never designed to handle. London-based Overmind believes this gap could become one of the biggest barriers to large-scale adoption, and it has now raised fresh funding to tackle it.

A new layer for agentic AI safety

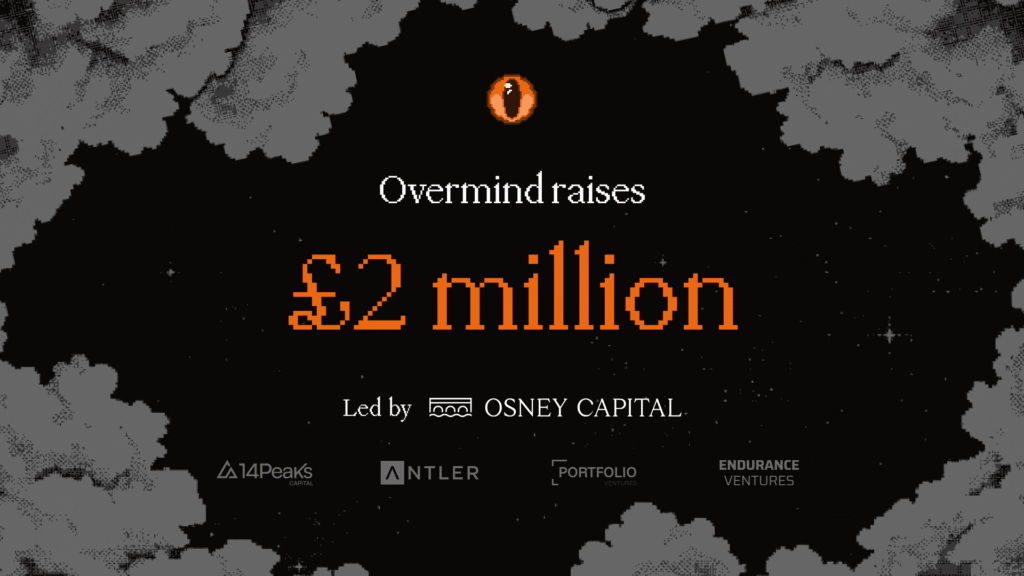

Overmind has closed a £2 million seed funding round to accelerate the development of its supervision platform for AI agents. The round was led by specialist cybersecurity investor Osney Capital, with participation from 14Peaks, Portfolio Ventures, Antler, and Endurance Ventures. The funding reflects growing investor interest in the emerging infrastructure needed to make agentic AI safe and deployable in production environments.

Founded by Tyler Edwards (CEO), Akhat Rakishev (CTO), and Sam Brunt (CRO), Overmind is focused on building a control and supervision layer that sits above AI agents once they are live. Rather than preventing organisations from deploying autonomous systems, the platform is designed to make those deployments safer, more transparent, and easier to manage over time.

The growing risk of autonomous systems

Agentic AI systems differ from traditional software in a fundamental way: once deployed, they can make decisions, take actions, and adapt their behaviour without constant human input. This autonomy unlocks efficiency and scale, but it also creates new exposure to adversarial prompts, data poisoning, unexpected feedback loops, and performance drift.

For organisations operating in regulated or high-stakes environments, these risks can quickly outweigh the benefits. Existing security tools tend to focus on static code, networks, or data access, leaving a blind spot when it comes to monitoring how AI agents behave once they are running autonomously.

Overmind’s platform addresses this challenge by giving organisations continuous visibility into agent behaviour. It monitors how agents act in live environments, flags deviations from expected behaviour, and enables intervention when necessary. This approach allows teams to retain control without undermining the autonomy that makes agentic systems valuable in the first place.

Learning from agents in production

Beyond monitoring, Overmind applies reinforcement learning techniques to help improve agent performance and reliability over time. By observing real-world behaviour and outcomes, the system can support ongoing optimisation while reducing the likelihood of repeated errors or unsafe actions.

According to CEO Tyler Edwards, the real challenge with agentic AI is not initial deployment but what happens afterwards. Once agents are operating in production, their behaviour can evolve in ways that are difficult to predict or audit. Overmind is designed to address this problem by providing continuous oversight rather than one-off testing or pre-deployment checks.

This focus on live supervision reflects a broader shift in how companies think about AI governance, moving from static compliance frameworks toward dynamic, operational risk management.

Targeting regulated industries first

With the new funding, Overmind plans to expand its engineering and research teams, deepen product development, and scale go-to-market efforts. The company is initially focusing on regulated sectors such as legal services, healthcare, and fintech, where data protection, compliance, and auditability are essential and where the risks of uncontrolled AI behaviour are particularly high.

These industries are already exploring agentic AI to automate document handling, decision support, and customer interactions, but many deployments remain limited because of concerns about trust and control. Overmind aims to remove that friction by making autonomous systems safer to operate at scale.

Building trust into the AI stack

As agentic AI becomes more capable, the infrastructure around it is rapidly emerging as a critical layer of the technology stack. Overmind’s £2 million seed round highlights a growing recognition that supervision, security, and reliability are not optional extras but foundational requirements for the next phase of AI adoption.

By positioning itself as a safety and supervision layer rather than another AI agent, Overmind is betting that trust will become the defining factor in how quickly autonomous systems move from pilots to widespread production use.